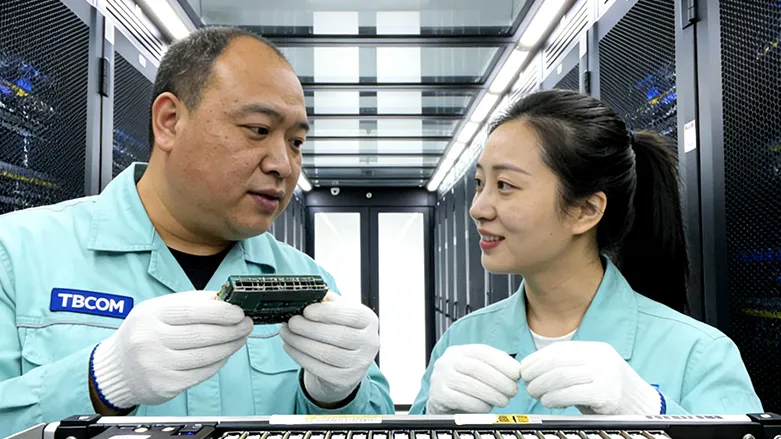

01

Short-reach, high-density design

Designed specifically for short-reach links of ≤50 m, the compact OSFP112 package suits leaf-spine networks and is compatible with GPU clusters and direct server-to-switch connections, addressing the core mismatch between computing power and bandwidth.